Anthropic's second code leak in one week exposed Claude Code's complete agentic harness architecture. Pattern recognition from surviving MCI/WorldCom and Windows vulnerabilities reveals this isn't an accident—it's the Norton moment for AI platforms. The 12-18 month execution window is open.

On March 31, 2026, Anthropic "accidentally" shipped 512,000 lines of Claude Code's internal source code to the public npm registry.

59.8 MB JavaScript source map. 1,906 files. Complete agentic harness architecture exposed.

Within hours, a security researcher posted the download link on X. 28 million views. Tens of thousands of GitHub forks. Over 8,000 DMCA takedowns that only accelerated distribution.

This is the second time in a week that Anthropic has leaked internal code.

I don't think it was a mistake.

I've seen this pattern before.

Faulty code shipped in a monthly system update. I had to back out the entire system-level change at 2 AM. It didn't happen again because we implemented second-level QA with EDS.

One mistake? Human error.

Two mistakes in one week? Something else.

When the Windows architecture was exposed, it didn't create "open innovation."

It created the spam/scam explosion.

Bad actors now had the blueprint.

Norton built a billion-dollar antimalware business because someone had to plug the hole.

The architecture of a Unix-based AI platform is now public.

Not just public — permanently distributed across decentralized networks where DMCA notices are meaningless.

The agentic harness that controls file system access, tool orchestration, prompt-injection defenses, workspace budgeting — all of it — now in the hands of threat actors.

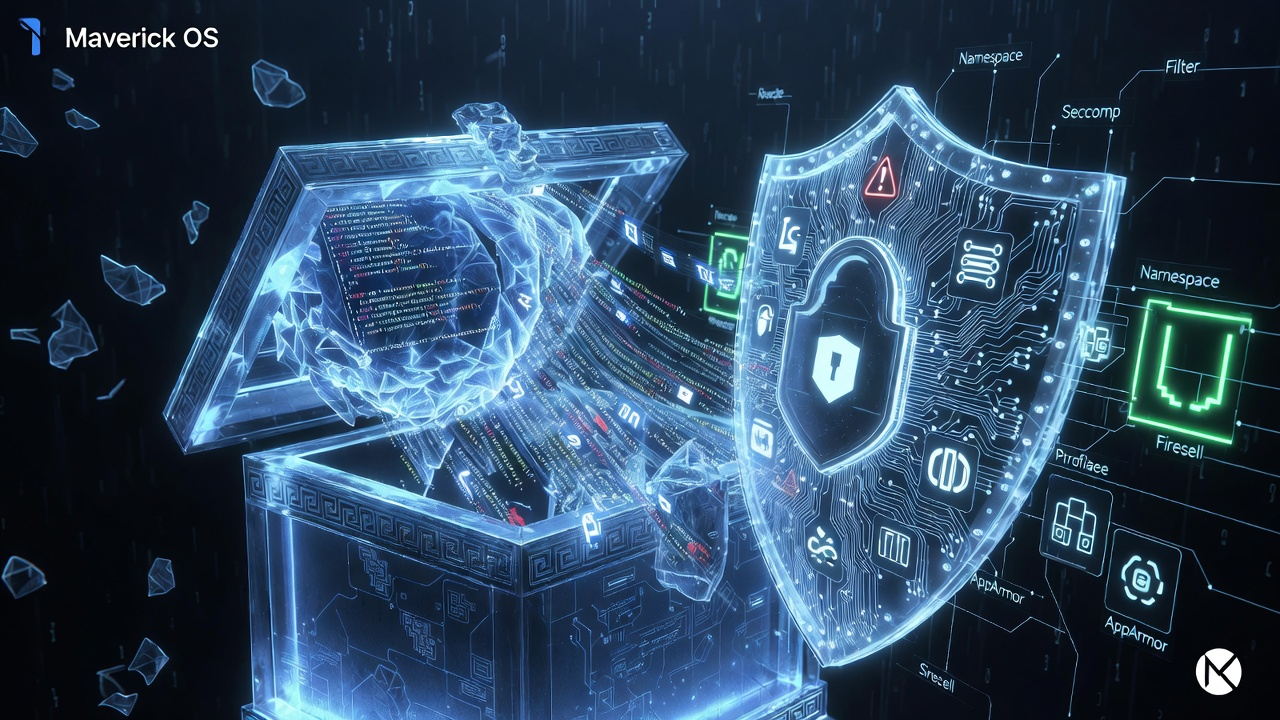

This is Pandora's Box.

And the AI Tsunami's second wave is about to hit.

Clean-room rewrites in Python and Rust are already proliferating. Competitors are reverse-engineering Anthropic's agent logic. AI coding tools are flooding the market at a fraction of enterprise pricing.

Market impact: 12-18 months of Anthropic R&D just became community property.

The real crisis isn't "open source AI tools."

It's what happens when bad actors have production-grade blueprints for:

This is the npm supply chain contamination event UNIX administrators have been dreading.

Every developer workstation running Claude Code or its forks is now a potential attack surface.

Every CI/CD pipeline pulling from npm is at risk of subtle backdoors in "community-maintained" versions.

Every enterprise that deployed Claude Code faces immediate audit and compliance exposure.

The packaging error revealed Claude Code's internal "agentic harness" — the critical layer that manages how AI interacts with:

File System Operations: Complete visibility into how Claude Code reads, writes, and manipulates files in development environments. Anyone who studies the code, attackers included, can now see exactly how to exploit these patterns.

Tool Orchestration: The logic for how Claude Code decides which tools to use, when to use them, and how to chain operations together. Competitors can now replicate this decision-making architecture.

Prompt Injection Defenses: The very mechanisms designed to prevent malicious prompts are now exposed. Threat actors can study these defenses to craft targeted attacks that bypass them.

Memory and Token Management: How Claude Code manages context windows, compacts memory, and budgets computational resources. This knowledge enables optimization of attack payloads.

Workspace Budgeting: The rules governing what Claude Code can and cannot do within a development environment. Understanding these boundaries is the first step to breaking them.

This isn't a theoretical risk. It's a structural vulnerability that affects every layer of modern software development.

Millions of UNIX-based development environments rely on npm for package management. The Claude Code leak demonstrates that a single packaging error can expose proprietary AI infrastructure to permanent public distribution.

Similar oversights in other AI tooling packages or their transitive dependencies are now high-probability vectors.

Within 24 hours of the leak, the codebase was:

The material is permanently available. DMCA notices only accelerated distribution through the Streisand effect.

Anthropic's second major accidental disclosure in days signals systemic process weaknesses. For security-conscious UNIX administrators, this necessitates immediate review of all Anthropic-sourced packages and enhanced build-time artifact scrubbing.
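As one way to picture "build-time artifact scrubbing," here is a minimal Python sketch of a pre-publish check that looks for source maps in a build output directory. The function name `find_source_map_leaks` is hypothetical, and the check is an illustration of the idea, not a description of Anthropic's release process or of any existing tool.

```python
import re
from pathlib import Path

# The trailing comment that bundlers emit to point a JS file at its source map.
SOURCE_MAP_COMMENT = re.compile(r"//[#@]\s*sourceMappingURL=\S+")

# Assumption: these are the JavaScript extensions worth scanning.
JS_SUFFIXES = {".js", ".mjs", ".cjs"}


def find_source_map_leaks(package_dir):
    """Return (map_files, referencing_files) as sorted paths relative to package_dir.

    map_files         -> every *.map file that would ship with the package
    referencing_files -> every JS file that still carries a sourceMappingURL comment
    """
    root = Path(package_dir)
    map_files = []
    referencing_files = []
    for path in root.rglob("*"):
        if not path.is_file():
            continue
        rel = path.relative_to(root).as_posix()
        if path.suffix == ".map":
            map_files.append(rel)
        elif path.suffix in JS_SUFFIXES:
            text = path.read_text(encoding="utf-8", errors="ignore")
            if SOURCE_MAP_COMMENT.search(text):
                referencing_files.append(rel)
    return sorted(map_files), sorted(referencing_files)
```

A release script could call `find_source_map_leaks("dist")` (the directory name is an assumption) and refuse to publish if either list is non-empty. This catches only the specific failure described here, a source map riding along in the published package; it is not a general secret scanner.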

Enterprises using Claude Code face audit and licensing exposure. The question is no longer "if" but "when" downstream exploits appear in production environments.

They're still reading headlines about "Anthropic embraces open source."

They think this is about innovation and collaboration.

They don't understand what just happened because they weren't there in the 1990s when Windows vulnerabilities spawned an entire security industry.

They didn't manage UNIX systems when one bad package could compromise an entire infrastructure.

They didn't live through MCI/WorldCom when "packaging errors" weren't errors at all.

Pattern recognition you can't buy.

Identify which teams are running Claude Code or community forks. Assess npm dependency exposure across all development environments. Map file system access patterns in current AI tool deployments.
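A first pass at that inventory can be scripted. The sketch below walks a directory of checked-out repositories, reads each `package-lock.json`, and reports any that record a watched package. It assumes `@anthropic-ai/claude-code` is the package name to watch; names of community forks are not known here and would have to be added by whoever runs it. The function names are hypothetical.

```python
import json
from pathlib import Path

# Assumption: the package names to look for. Add fork names as you learn them.
WATCHED = {"@anthropic-ai/claude-code"}


def packages_in_lockfile(lock_path):
    """Yield (name, version) for every package recorded in a package-lock.json."""
    data = json.loads(Path(lock_path).read_text(encoding="utf-8"))
    # lockfileVersion 2 and 3: flat "packages" map keyed by install path.
    for key, meta in data.get("packages", {}).items():
        if "node_modules/" in key:
            name = key.rsplit("node_modules/", 1)[1]
            yield name, meta.get("version") or "unknown"
    # lockfileVersion 1 (kept in v2 for compatibility): nested "dependencies" tree.
    stack = [data.get("dependencies", {})]
    while stack:
        for name, meta in stack.pop().items():
            yield name, meta.get("version") or "unknown"
            stack.append(meta.get("dependencies", {}))


def find_watched(root, watched=WATCHED):
    """Return sorted (lockfile, package, version) tuples for watched packages under root."""
    root = Path(root)
    hits = set()
    for lock in root.rglob("package-lock.json"):
        if "node_modules" in lock.parts:
            continue  # skip lockfiles vendored inside installed packages
        for name, version in packages_in_lockfile(lock):
            if name in watched:
                hits.add((lock.relative_to(root).as_posix(), name, version))
    return sorted(hits)
```

This only sees project-level npm lockfiles. Global installs on developer workstations, and projects using other package managers, would need a separate check.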

Evaluate potential attack surfaces introduced by exposed agent logic. Review compliance implications for regulated industries (financial services, healthcare, defense). Assess vendor lock-in risks for AI development infrastructure.

Organizations face a choice:

The companies that move in the next 90 days will own the security category.

The ones that wait will be customers.

This is what synthetic intelligence predictive analysis looks like.

Not regurgitating press releases. Not summarizing tech blog commentary.

Connecting patterns across 45 years of surviving corporate disruptions to spot what's coming before the market sees the crisis.

While competitors celebrate "democratized AI," the real intelligence is recognizing:

The code cannot be recalled.

Bad actors now have the blueprint for exploiting Unix-based AI agent platforms.

Someone's going to build the security layer for this new attack surface.

The question is whether you'll be early or late.

Stop Reading. Start Seeing.

Crisis-to-Revenue Intelligence from M.A.P. — Maverick Advantage Platform. Pattern recognition from 45 years surviving AT&T's breakup, Navistar's collapse, MCI/WorldCom's implosion, Y2K, and the 2008 financial crisis.
