The Claude Code leak creates three revenue opportunities: Norton for AI agents (12-18 month window), secure supply-chain tooling (6-9 months), and clean-room rewrite services (3-6 months). Plus execution priorities from someone who watched Windows vulnerabilities spawn the antimalware industry.

The Claude Code leak isn't a crisis for everyone.

For security vendors, infrastructure providers, and enterprise consultants, it's a window of 3 to 18 months, depending on the play, to build categories that don't exist yet.

Someone's going to make hundreds of millions providing the tools enterprises need to survive the second wave of the AI Tsunami.

Here's how.

Execution Window: 12-18 months

Claude Code runs on Unix-based architecture. That code is now permanently public. The agentic harness that controls file system access, tool orchestration, and workspace operations is in the hands of threat actors.

Someone needs to build the security layer.

When Windows architecture became exposed in the 1990s, Norton built a billion-dollar antimalware business.

This is that moment for AI agent platforms.

AI-Specific Threat Detection for Unix-Based Platforms:

Enterprise-Grade AI Agent Hardening Platform:

Enterprises running any AI coding assistant: Claude Code, Cursor, Copilot, or any autonomous development tool.

DevSecOps teams: Managing developer workstation fleets where every machine is now a potential attack surface.

Government contractors: AI supply-chain sovereignty concerns just became mission-critical.

Regulated industries: Financial services, healthcare, defense — any organization that can't audit 512,000 lines of leaked code but still needs AI development tools.

Subscription tiers for enterprise fleets: $50-500 per seat per month depending on security requirements and deployment size.

Managed security services: 5-figure monthly retainers for continuous monitoring, threat detection, and incident response.

Government contracts: "Sovereign AI development environments" for defense and critical infrastructure, at 6-7 figures per year.

Integration partnerships: Revenue sharing with enterprise software vendors who need to secure their AI tool deployments.

You have 12-18 months before this becomes obvious and competitive.

Right now, most CISOs don't even know there's a problem. They're reading headlines about "open source innovation."

By Q4 2026, when the first major AI agent exploit hits production, they'll be scrambling for solutions.

The companies that build now will own the category. Late movers will fight for scraps.

Execution Window: 6-9 months

The npm registry just proved it's a single point of failure for AI development infrastructure.

Anthropic's "packaging error" exposed a systemic weakness: nobody's validating what ships in AI tooling packages.

Every AI infrastructure company is now paranoid. Every developer tools vendor is reviewing their release pipeline. Every enterprise platform engineering team is asking "could this happen to us?"

The answer is yes. And they need tools to prevent it.
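What does pre-publish validation look like in practice? Here's a minimal sketch, in Python, of the kind of gate such a toolchain might run before every release. It assumes the input is the JSON that `npm pack --dry-run --json` prints (a list of packed packages, each with a `files` array of `path`/`size` entries). The deny-list patterns, the 10 MiB size ceiling, and the `find_violations` name are illustrative assumptions, not an npm feature or any vendor's product. Source maps are on the list because a `.map` file can carry the original source in its `sourcesContent` field.

```python
import fnmatch
import json
import posixpath

# Hypothetical deny-list (an assumption, not an npm default): file-name patterns
# that should never appear in a published tarball. Source maps (*.map) can embed
# the original, unminified source via their "sourcesContent" field.
DENIED_PATTERNS = ("*.map", ".env*", "*.pem", "*.key")

# Hypothetical size ceiling for any single shipped file (10 MiB).
MAX_FILE_BYTES = 10 * 1024 * 1024


def find_violations(pack_json: str) -> list[str]:
    """Return problems found in `npm pack --dry-run --json` output.

    Assumes the shape npm prints: a list of package entries, each with a
    "files" list of {"path": ..., "size": ...} objects.
    """
    problems = []
    for package in json.loads(pack_json):
        for entry in package.get("files", []):
            path = entry["path"]
            name = posixpath.basename(path)
            # Report at most one deny-list hit per file.
            for pattern in DENIED_PATTERNS:
                if fnmatch.fnmatchcase(name, pattern):
                    problems.append(f"denied file: {path} (matches {pattern})")
                    break
            # Size is checked independently of the deny-list.
            if entry.get("size", 0) > MAX_FILE_BYTES:
                problems.append(f"oversized file: {path} ({entry['size']} bytes)")
    return problems


# Example: a dry-run listing that accidentally includes a source map.
sample = json.dumps([
    {"files": [
        {"path": "dist/cli.js", "size": 1200},
        {"path": "dist/cli.js.map", "size": 4000},
    ]}
])
print(find_violations(sample))
# → ['denied file: dist/cli.js.map (matches *.map)']
```

In CI, you'd pipe the dry-run output into this check and fail the release on any non-empty result. A deny-list is the simplest possible design; a commercial tool would likely pair it with an explicit allow-list (such as the `files` field in `package.json`) so that new artifact types fail closed instead of shipping silently.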

Commercial CLI/Toolchain for Release Hygiene:

Pre-Publish Validation:

SBOM Generation and Tracking:

Continuous Monitoring:

AI infrastructure companies: Every single one is now reviewing their release process. First vendor with a turnkey solution wins.

Developer tools vendors: GitHub Copilot, Cursor, Replit — anyone shipping AI-powered dev tools needs this yesterday.

Enterprise platform engineering teams: Companies with internal AI tooling that can't afford a similar leak.

Open-source AI projects: Need credibility and security guarantees to compete with commercial alternatives.

SaaS subscription: $500-5,000 per month depending on organization size and package volume.

One-time security audits: $25K-100K per codebase for comprehensive release pipeline review.

Integration partnerships: Revenue sharing with GitHub, GitLab, and npm for native platform integration.

Training and certification: Security hygiene workshops for development teams ($5K-15K per session).

6-9 months before GitHub/GitLab build this natively into their platforms.

First mover captures enterprise contracts and establishes the standard. Late movers become feature requests for the winner.

Execution Window: 3-6 months

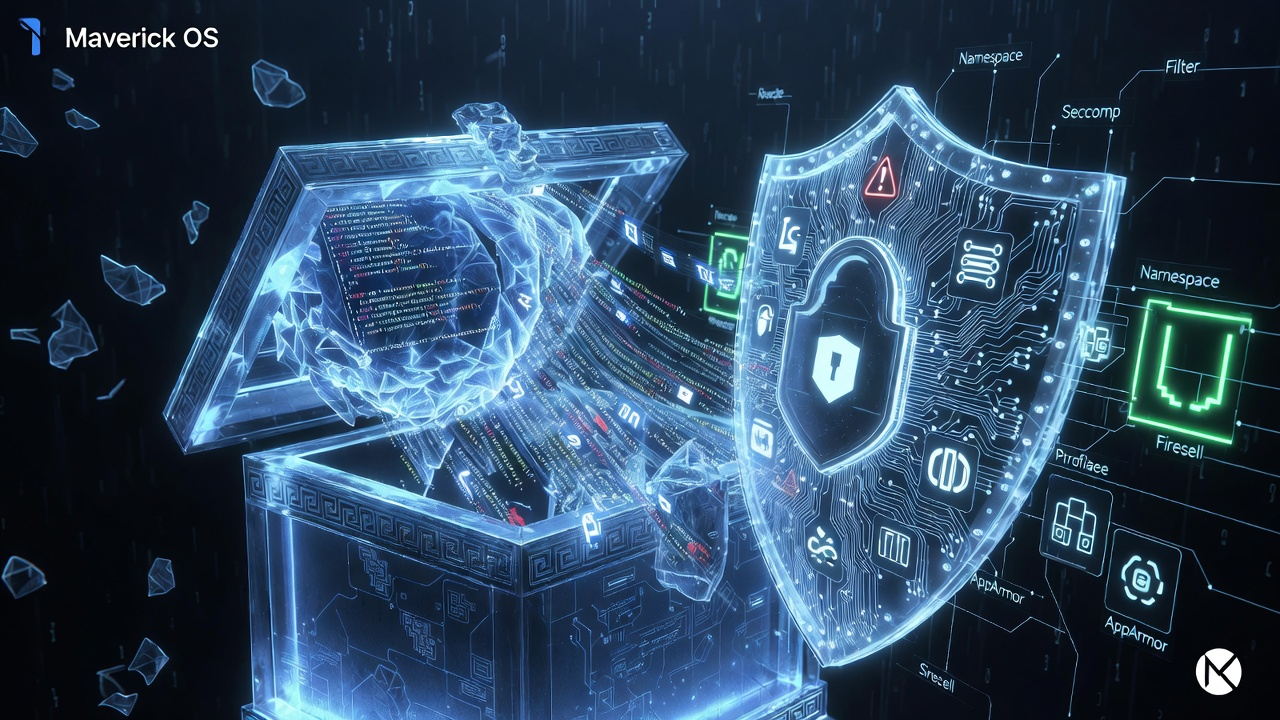

The code is out. It's not going back in the box.

Organizations now face an impossible choice:

There's a fourth option nobody's offering yet: Audited, performance-optimized rewrites with compliance guarantees.

Consulting Services:

Managed Services:

Compliance Packaging:

Fortune 500 companies using AI coding tools: Can't afford the reputational or compliance risk of exposed agent code.

Financial services firms: Regulatory compliance nightmare from using leaked code in production environments.

Healthcare organizations: HIPAA exposure from AI agents with unknown security profiles accessing patient data systems.

Defense contractors: National security implications of using compromised AI development infrastructure.

Legal and accounting firms: Professional liability concerns from using unaudited AI tools for client work.

Consulting engagements: $150K-500K per client for comprehensive audit, migration planning, and deployment support.

Managed hosting subscriptions: $10K-50K per month for secure, compliant AI development environments.

Annual compliance retainers: $100K-250K for continuous monitoring, security updates, and regulatory reporting.

Custom development: $500K-2M for bespoke clean-room implementations with organization-specific security requirements.

3-6 months before Big 4 consulting firms wake up and package this as "AI Supply Chain Risk Management."

You have maybe 90 days of true exclusivity before Deloitte, PwC, and EY launch competing practices.

Audit your own infrastructure:

Validate the opportunity:

Choose your play:

Build initial offering:

Launch and iterate:

Establish positioning:

Scale go-to-market:

Establish category leadership:

Most executives will spend the next 6 months debating whether this is actually a problem.

They'll form committees. They'll hire consultants to study the issue. They'll wait for "best practices" to emerge.

By the time they move, the opportunity will be gone.

Pattern recognition from someone who lived through:

This isn't theoretical. It's pattern repetition.

This is what synthetic intelligence predictive analysis delivers.

Not "here's what happened" summaries.

Not "here's what experts think" aggregation.

"Here's what's coming and here's how to profit from it before anyone else sees it."

While your competitors are celebrating "democratized AI," M.A.P. subscribers are executing on:

The box is open.

The code cannot be recalled.

Bad actors now have the blueprint for exploiting Unix-based AI agent platforms.

Someone's going to build the security layer for this new attack surface.

Someone's going to make hundreds of millions providing the tools enterprises need to survive the second wave of the AI Tsunami.

That someone should be you.

Stop Reading. Start Seeing.

Get weekly crisis-to-revenue intelligence delivered every Monday. Join M.A.P. →

Read the full crisis analysis. The Claude Code Leak: Pandora's Box Opens →

Revenue Intelligence from M.A.P. — Maverick Advantage Platform. Pattern recognition from 45 years surviving AT&T's breakup, Navistar's collapse, MCI/WorldCom's implosion, Y2K, and the 2008 financial crisis. Not a ChatGPT prompt.